Structuring research narratives

The story you tell about your research is the research. The narrative you share with the research community is how your findings are understood by others; without a story, there is no research.

Research papers are the most common medium for presenting research stories, but talks, posters, and hallway conversations all involve making choices about how to structure your research narrative.

Learning to structure research narratives doesn’t come naturally, and it’s one of the things you’ll learn during a PhD. Many researchers learn by imitating the structure of existing abstracts or Introduction sections, but I find it’s really useful to have a rough framework in mind. What follows is the framework I use, as taught to me by Mike Wittie during a 2012 REU program. I think of this framework like the five-paragraph essay: a structure that helps you produce a reasonable argument, but one that becomes overly restrictive once you gain more experience structuring research narratives.

A framework for structuring research narratives

The framework has four components:

- Societal Problem

- Technical Problem

- Technical Solution

- How the Solution Helps Society

Societal problem

The societal problem tells us how the world is lacking.

The society in question can be as broad as you choose, from all of humanity to a small group of researchers, engineers, or practitioners trying to accomplish something.

A problem must – in principle – be solvable.

The problem should be as specific as you can make it for the audience you have in mind. “Food insecurity exists” is a societal problem, but “food distribution networks cannot meet existing needs” is more appropriate if the research you conducted is specific to food distribution.

Technical problem

The technical problem is why we can’t just fix the societal problem.

The technical problem is the domain-specific problem contained within the broader problem that is amenable to attack by our discipline’s methods.

Resolving the technical problem should recognizably move us closer to solving the societal problem.

The line between the societal problem and the technical problem is necessarily fuzzy. In general, you should start with a problem recognizable to any member of your audience and take argumentative steps toward a concrete technical problem specific to your discipline.

The societal problem and technical problem together are your strongest motivations for conducting this research. There may be other motivations that you mention incidentally or while discussing how your research relates to prior work, but lead with your strongest argument and ensure that addressing the technical problem clearly addresses the societal problem.

Technical solution

The technical solution is how our research fixes the identified technical problem.

Your solution should correspond clearly to the points you raised in the technical problem. If your technical solution seems to respond to a broader or narrower problem than your technical problem suggests, that’s a good indicator that you should rework the technical problem or consider presenting less of the work.

For in-progress work, it is fine for the technical solution to be tentative or incomplete; by explicitly characterizing the technical problem you’re trying to solve, you make it obvious where the holes are in your solution.

It can be appropriate for the technical solution to include only a brief teaser of your research work. In longer formats, it is fine to include additional details about the methods you used and your results alongside the technical solution.

How the solution helps society

This final component draws us back from the specifics of your solution to the new state of the societal problem following your research.

If you discussed specific results while describing your technical solution, you should link those results to specific changes for researchers or practitioners who are facing the same societal problem.

Describing the societal benefit can be very brief, but you must do it: remind your reader where you started, or you’ll be left with an incomplete narrative.

Arguments to avoid

Choosing an appropriate societal problem and technical problem is important because these communicate your core motivations for conducting the research. Unconvincing or vague motivations will leave your audience confused about the potential value of your research.

Here are two common ‘bad’ arguments to avoid:

“X is hard”

Why haven’t we fixed the societal or technical problem yet? Well, because they’re hard problems!

Consider this argument:

Societal problem: Lack of access to food causes human misery. Food distribution networks can provide access, but designing and implementing these networks is challenging.

Technical problem: Networks need stable supply chain data to distribute food.

Technical solution: We propose a new method for collecting and structuring supply chain data.

How the solution helps society: Our method enables food distribution networks to operate more efficiently and so increase access to food.

Instead, we should be more specific about why these problems are challenging.

Societal problem: Food distribution networks can provide access to food, but designing effective networks depends on access to stable supply chain data.

Technical problem: Suppliers provide data in diverse formats or not at all.

Technical solution: We propose a new method for collecting and structuring supply chain data.

How the solution helps society: Our method enables food distribution networks to operate more efficiently and so increase access to food.

Sharpening the societal problem and specifying a concrete cause forced us to be more specific about the technical problem we actually solved.

When you feel tempted to make an “X is hard” argument, that’s usually a sign that your argument is too high-level. That can be okay for an elevator pitch, but you can make a stronger argument by being specific about what is hard.

Research gaps

One common piece of advice is to identify a “research gap”: a research question that hasn’t yet been addressed in the published literature.

However, research gaps make for ineffective motivations.

As Casey Fiesler writes on Bluesky:

I would love to never see the phrase “little is known about” in a paper ever again.

(1) Most of the time the authors are far more confident about that statement than they should be.

(2) There are lots of things we know little about because no one cares. Just not knowing isn’t a good motivation.

Fiesler is correct: “there’s a gap” is not a compelling justification for doing research. It is good to notice when there are gaps, but you should first ask:

Why is there a gap?

Use the answer to that question as your technical problem instead.

Example: My first paper

Let’s look at the abstract from my first paper and see how well I followed this framework.

The paper was called “Bridging Qualitative and Quantitative Methods for User Modeling: Tracing Cancer Patient Behavior in an Online Health Community”, and it proposed a new method for researchers to use when constructing quantitative user models. This is a hard test for this framework, because the contribution – and thus my motivation – was very conceptual.

Here’s the abstract, with annotations added:

Societal problem: Researchers construct models of social media users to understand human behavior and deliver improved digital services. Such models use conceptual categories arranged in a taxonomy to classify unstructured user text data.

Technical problem: In many contexts, useful taxonomies can be defined via the incorporation of qualitative findings, a mixed-methods approach that offers the ability to create qualitatively-informed user models. But operationalizing taxonomies from the themes described in qualitative work is non-trivial and has received little explicit focus.

Technical solution: We propose a process and explore challenges bridging qualitative themes to user models, for both operationalization of themes to taxonomies and the use of these taxonomies in constructing classification models.

Method details: For classification of new data, we compare common keyword-based approaches to machine learning models. We demonstrate our process through an example in the health domain, constructing two user models tracing cancer patient experience over time in an online health community.

How the solution helps society: We identify patterns in the model outputs for describing the longitudinal experience of cancer patients and reflect on the use of this process in future research.

Overall, I think this abstract is okay.

- My societal problem is expressed very implicitly. That can be okay, but I could consider an explicit frame instead: “Models of social media users without valid conceptual categories can produce misunderstandings and lead to ineffective designs.”

- The technical problem uses both of the arguments to avoid: I say the process is hard and that there’s a research gap! Instead, I should be specific: “But operationalizing taxonomies from the themes described in qualitative work requires a process that can bridge from the thematic to the concrete.”

- My description of the technical solution is vague, although I think that’s mostly okay in an abstract.

- My description of how the solution helps society is too vague. I should tie it back to the problems I identified more explicitly: “Our process creates quantitative user models that are both useful and valid.”

If I had used this framework more explicitly, I would have produced a stronger abstract! Here’s a revised version:

Societal problem: Quantitative models of social media users without valid conceptual categories can produce misunderstandings and lead to ineffective designs.

Technical problem: In many contexts, useful category taxonomies can be defined via the incorporation of qualitative findings, a mixed-methods approach that offers the ability to create qualitatively-informed user models. But operationalizing taxonomies from the themes described in qualitative work requires a process that can bridge from the thematic to the concrete.

Technical solution: We propose a process and explore challenges bridging qualitative themes to user models, for both operationalization of themes to taxonomies and the use of these taxonomies in constructing classification models.

Method details: For classification of new data, we compare common keyword-based approaches to machine learning models. We demonstrate our process through an example in the health domain, constructing two user models tracing cancer patient experience over time in an online health community.

How the solution helps society: Our process produces user models with qualitatively-grounded categories that better capture user behavior and are more useful for researchers.

Using this framework

I try to use this framework as early as possible in a research project. I’ll write four high-level bullet points and see if I find the narrative compelling even without detail. Each month, I return to my bullet points, revising them to be more specific and noting how my thinking about the research has changed.

Research abstracts lend themselves well to this framework, since an abstract should usually serve as a brief summary of the research narrative. But abstracts can include other details as well (listings of results, calls to action, important related work), so I like to keep a “clean” version of the narrative for any research project I’m working on.

In longer formats, such as a research talk or an Introduction section, you might choose to include more or less detail in each component of this framework. It can be fine to start with a smaller “abstract” version of your research narrative – essentially functioning as a roadmap – or to introduce only the societal problem initially. Highly conceptual work might require significant exposition before the audience can even understand the technical problem. For example, many pure math talks are 80% “technical problem”, with a sliver of higher-level context at the start and a final theorem presented at the end, with details left to a longer-form write-up.

Other frameworks

There are many frameworks for structuring research narratives. While writing up this post, I stumbled on Maggie Appleton’s notes on “On Opening Essays, Conference Talks, and Jam Jars”, which uses a very similar narrative framing.

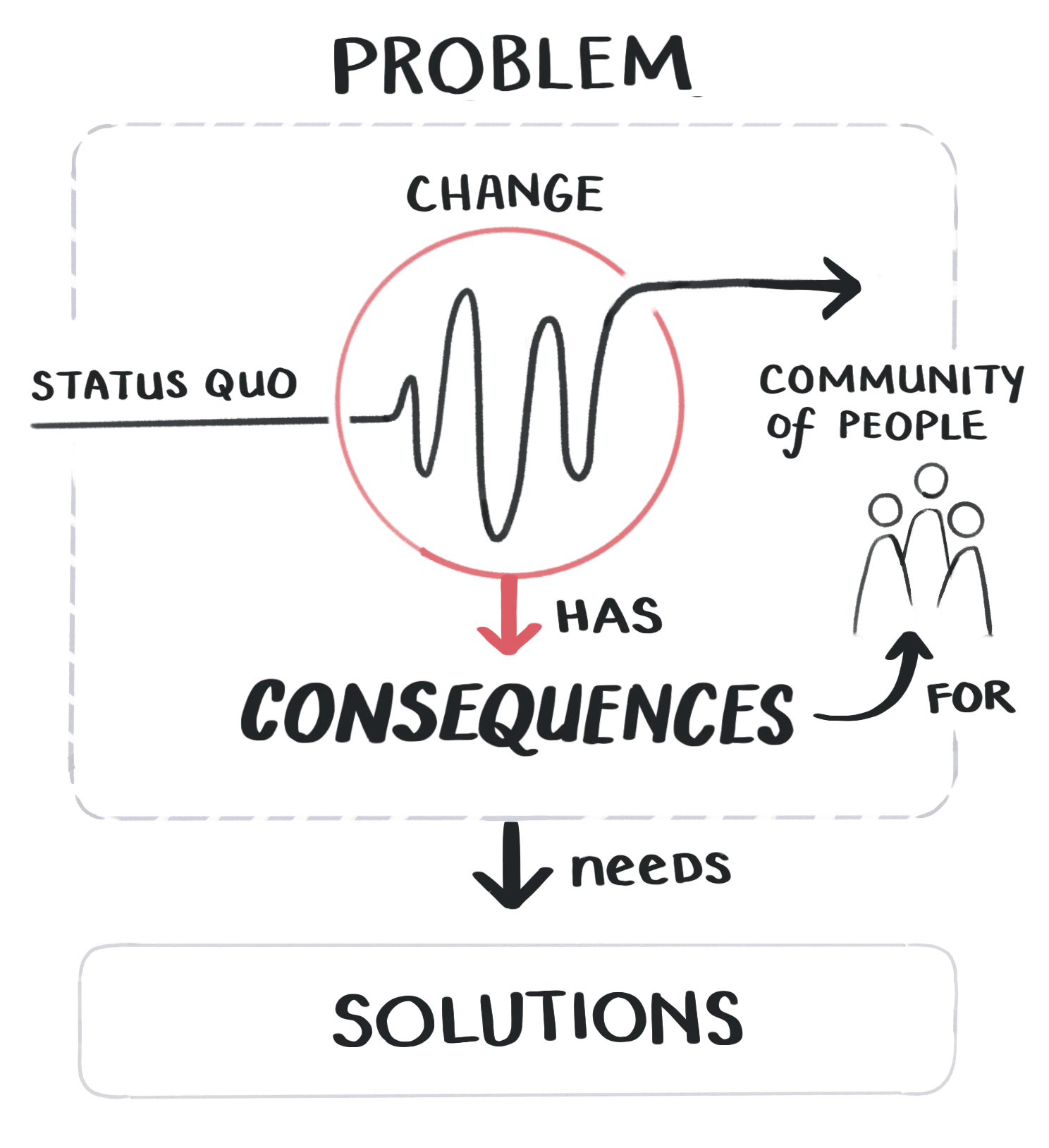

Maggie Appleton’s beautiful figure adapting Joseph Williams’ writing on problem structure.


I was taught to write abstracts by John Carlis’ “Thinking about Abstracts”. He breaks down the story of a research abstract into a set of “sentence purposes”, and several of his examples strongly resemble the framework above. But Carlis makes an important point: there’s nothing sacred about a particular narrative structure. The framework above matches researcher expectations in the fields I know well, but other fields can have very different expectations regarding research stories.

A good abstract tells a story. Furthermore, the parts of the story appear in a sensible order, one commonly seen by its (limited) audience. It, therefore, meets its readers’ expectations. When writing my own abstracts or helping others, I find that stating the purpose(s) of each sentence helps me see the story’s flow from a reader’s point of view, and readily find flaws in the story. If you think of an abstract as telling a story, then of course only some content and orderings are effective, and you can judge when content and order works or doesn’t.

When you start to diverge from the structure I present above, ask yourself: does this meet my readers’ expectations? Does my story ‘work’?

Other resources

- “10 tips for academic talks”. See the section on “Motivation” in particular.

- “Ten simple rules for structuring papers”

- Eugene Yan’s “Frequently Asked Questions about My Writing Process” – Includes a nice set of resources related to writing at the bottom of the post